At GTC 2021, NVIDIA announced its brand-new Quantum-2 InfiniBand networking platform, powered by its BlueField-3 DPU and Quantum-2 InfiniBand switches.

NVIDIA Unveils Next-Generation Quantum-2 InfiniBand Network Platform Powered by BlueField-3 DPU and Quantum-2 InfiniBand Switches

Press release: NVIDIA today announced NVIDIA Quantum-2, the next generation of its InfiniBand network platform, which offers the exceptional performance, wide availability and strong security needed by cloud computing providers and supercomputing centers.

The most advanced end-to-end network platform ever created, NVIDIA Quantum-2 is a 400Gbps InfiniBand network platform that consists of the NVIDIA Quantum-2 switch, the ConnectX-7 network adapter, the BlueField-3 data processing unit (DPU), and all the software that supports the new architecture.

The introduction of NVIDIA Quantum-2 comes as supercomputing centers are increasingly opening up to many users, many of them from outside their own organizations. At the same time, global cloud service providers are beginning to offer more supercomputing services to their millions of customers.

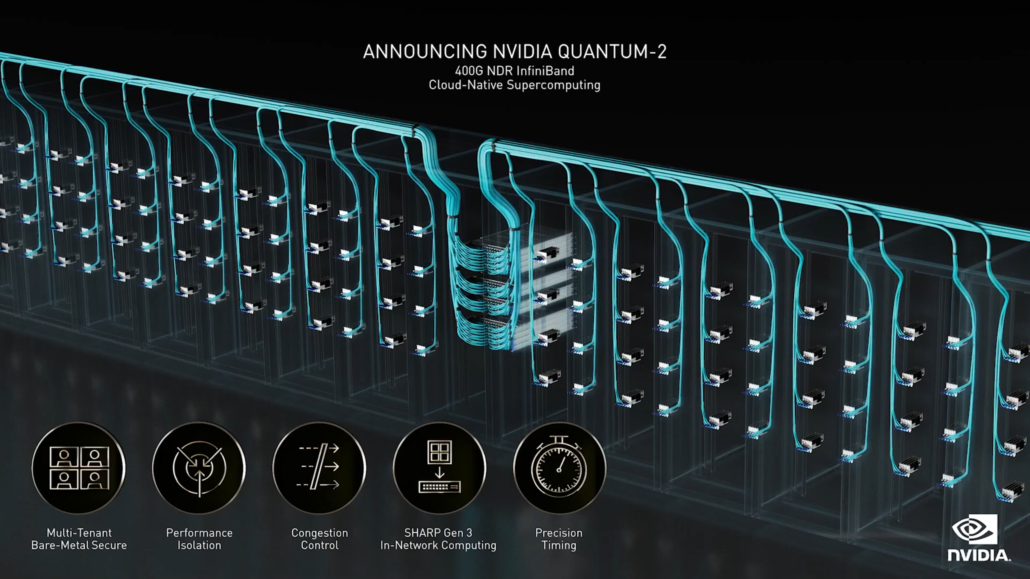

NVIDIA Quantum-2 includes key features needed for the demanding workloads that run in both arenas. Built with cloud technology, it delivers high performance with 400 gigabits per second of bandwidth and advanced multi-tenancy to accommodate many users.

“The demands of today’s supercomputing centers and public clouds are converging,” said Gilad Shainer, senior vice president of networking at NVIDIA. “They need to provide the highest possible performance for the next generation of HPC, AI and data analytics challenges, while securely isolating workloads and meeting different user traffic requirements. This vision of a modern data center is now real with NVIDIA Quantum-2 InfiniBand.”

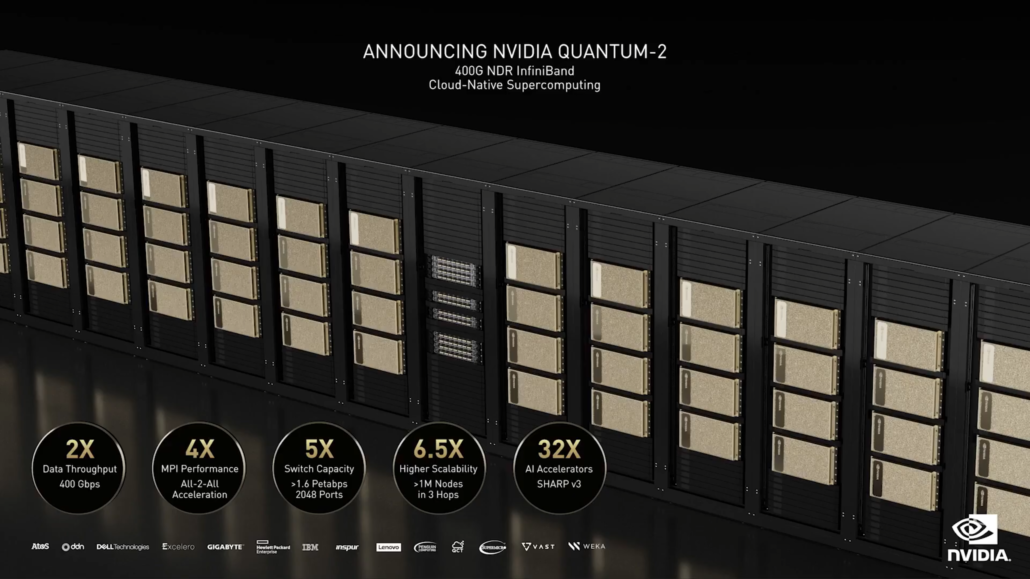

NVIDIA Quantum-2 performance and cloud capabilities

At 400Gbps, NVIDIA Quantum-2 InfiniBand doubles network speed and triples the number of network ports. It accelerates performance by 3 times and reduces the number of data center switches needed by 6 times, while cutting data center power consumption and data center space by 7 percent each.
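
To put the doubling of link speed in concrete terms, the following is a minimal sketch that compares ideal transfer times at 200 Gb/s and 400 Gb/s. The 200 Gb/s figure is inferred from the word "doubles", the 1 TB payload is a hypothetical example, and the calculation assumes the full line rate with no protocol overhead, so real transfers would take longer.

```python
def transfer_seconds(size_bytes: float, link_gbps: float) -> float:
    """Ideal time to move size_bytes over a link of link_gbps (no overhead)."""
    bits = size_bytes * 8
    return bits / (link_gbps * 1e9)

# Hypothetical payload: 1 TB (10**12 bytes), chosen only for illustration.
payload_bytes = 1e12

t_prev = transfer_seconds(payload_bytes, 200)  # assumed previous-generation rate
t_new = transfer_seconds(payload_bytes, 400)   # Quantum-2 rate quoted in the text

print(f"200 Gb/s: {t_prev:.1f} s")
print(f"400 Gb/s: {t_new:.1f} s")
print(f"Speedup: {t_prev / t_new:.1f}x")
```

Under these idealized assumptions the transfer time halves, which is all that "doubles network speed" guarantees by itself; the other gains quoted above are vendor figures that this arithmetic does not derive.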

Multi-tenant performance isolation in NVIDIA Quantum-2 keeps one tenant from disturbing others. It uses an advanced telemetry-based congestion control system with cloud capabilities that ensure reliable bandwidth, regardless of spikes in the number of users or in workload demands.

NVIDIA Quantum-2 SHARPv3 In-Network Computing technology provides 32 times more acceleration engines for AI applications than the previous generation. Advanced InfiniBand data center fabric management, including predictive maintenance, is enabled with the NVIDIA UFM Cyber-AI platform.

A nanosecond-precision timing system integrated into NVIDIA Quantum-2 can synchronize distributed applications, such as database processing, helping to reduce the overhead of wait and idle time. This new feature allows cloud data centers to become part of the telecommunications network and host software-defined 5G radio services.

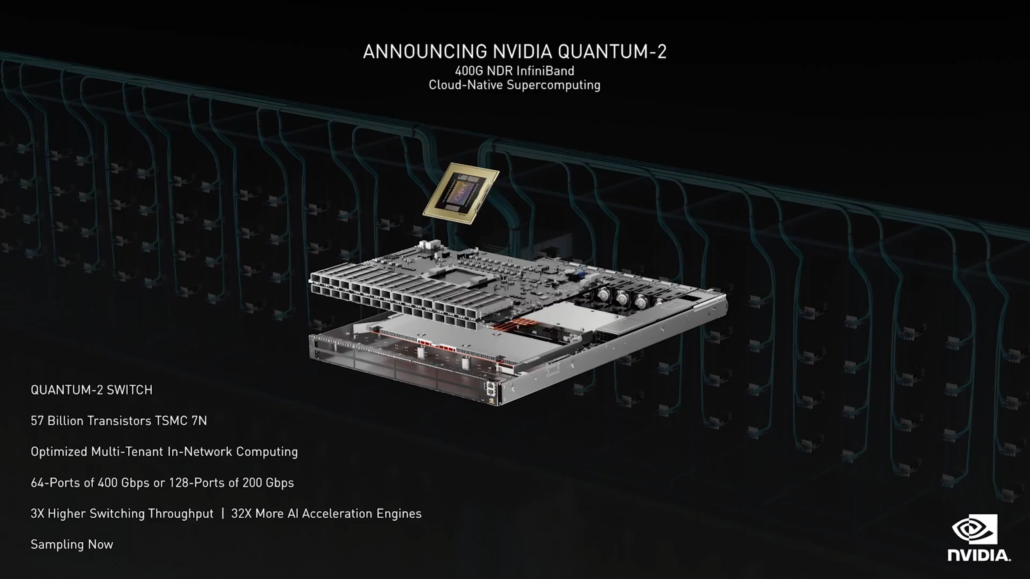

Quantum-2 InfiniBand switch

At the heart of the Quantum-2 platform is the new Quantum-2 InfiniBand switch. With 57 billion transistors on 7-nanometer silicon, it is slightly larger than the NVIDIA A100 graphics processor with 54 billion transistors.

It has 64 ports at 400Gbps or 128 ports at 200Gbps and will be available in a variety of switch systems with up to 2048 ports at 400Gbps or 4096 ports at 200Gbps, more than 5 times the switching capability of the previous generation, Quantum-1.
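
The port counts above can be checked with simple arithmetic. This sketch multiplies port count by per-port speed to get a unidirectional aggregate figure; it is an illustration of how the two port configurations relate, not a vendor-quoted throughput number, and the function name is hypothetical.

```python
def aggregate_gbps(ports: int, port_speed_gbps: int) -> int:
    """Unidirectional aggregate bandwidth in Gb/s: ports x per-port speed."""
    return ports * port_speed_gbps

# Port configurations quoted in the text.
switch_400 = aggregate_gbps(64, 400)     # single switch, 400 Gb/s ports
switch_200 = aggregate_gbps(128, 200)    # single switch, 200 Gb/s ports
system_400 = aggregate_gbps(2048, 400)   # largest system, 400 Gb/s ports
system_200 = aggregate_gbps(4096, 200)   # largest system, 200 Gb/s ports

print(f"Single switch: {switch_400 / 1000} Tb/s or {switch_200 / 1000} Tb/s")
print(f"Largest system: {system_400 / 1000} Tb/s or {system_200 / 1000} Tb/s")
```

Both configurations of each device give the same total (25.6 Tb/s per switch, 819.2 Tb/s for the largest system), which shows that the 200Gbps mode splits each 400Gbps port in two rather than adding capacity.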

The combination of network speed, switching capability and scalability is ideal for building the next generation of giant HPC systems.

The NVIDIA Quantum-2 switch is now available from a wide range of leading infrastructure and system vendors worldwide, including Atos, DataDirect Networks (DDN), Dell Technologies, Excelero, GIGABYTE, HPE, IBM, Inspur, Lenovo, NEC, Penguin Computing, QCT, Supermicro, VAST Data and WekaIO.

Quantum-2, ConnectX-7 and BlueField-3

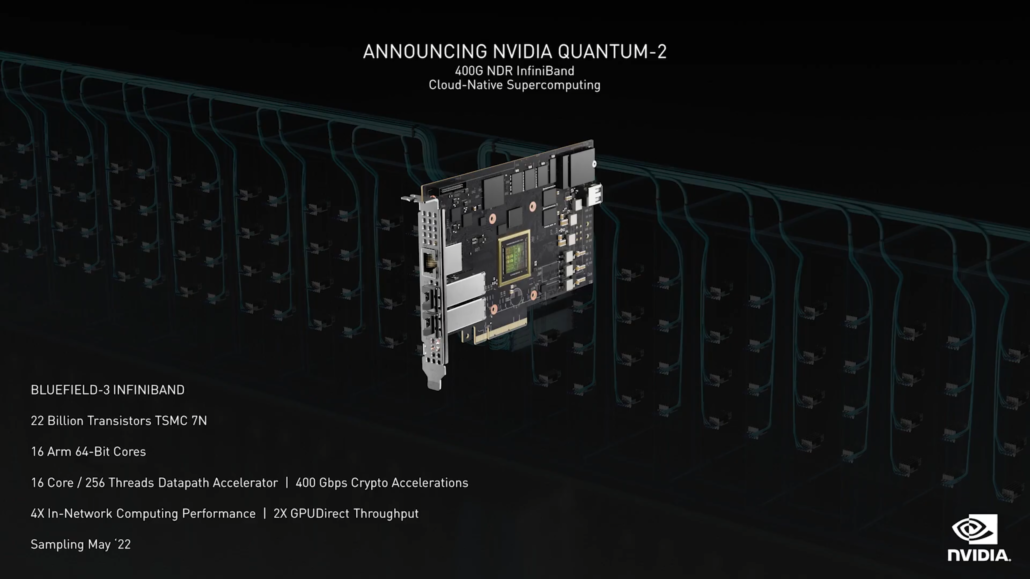

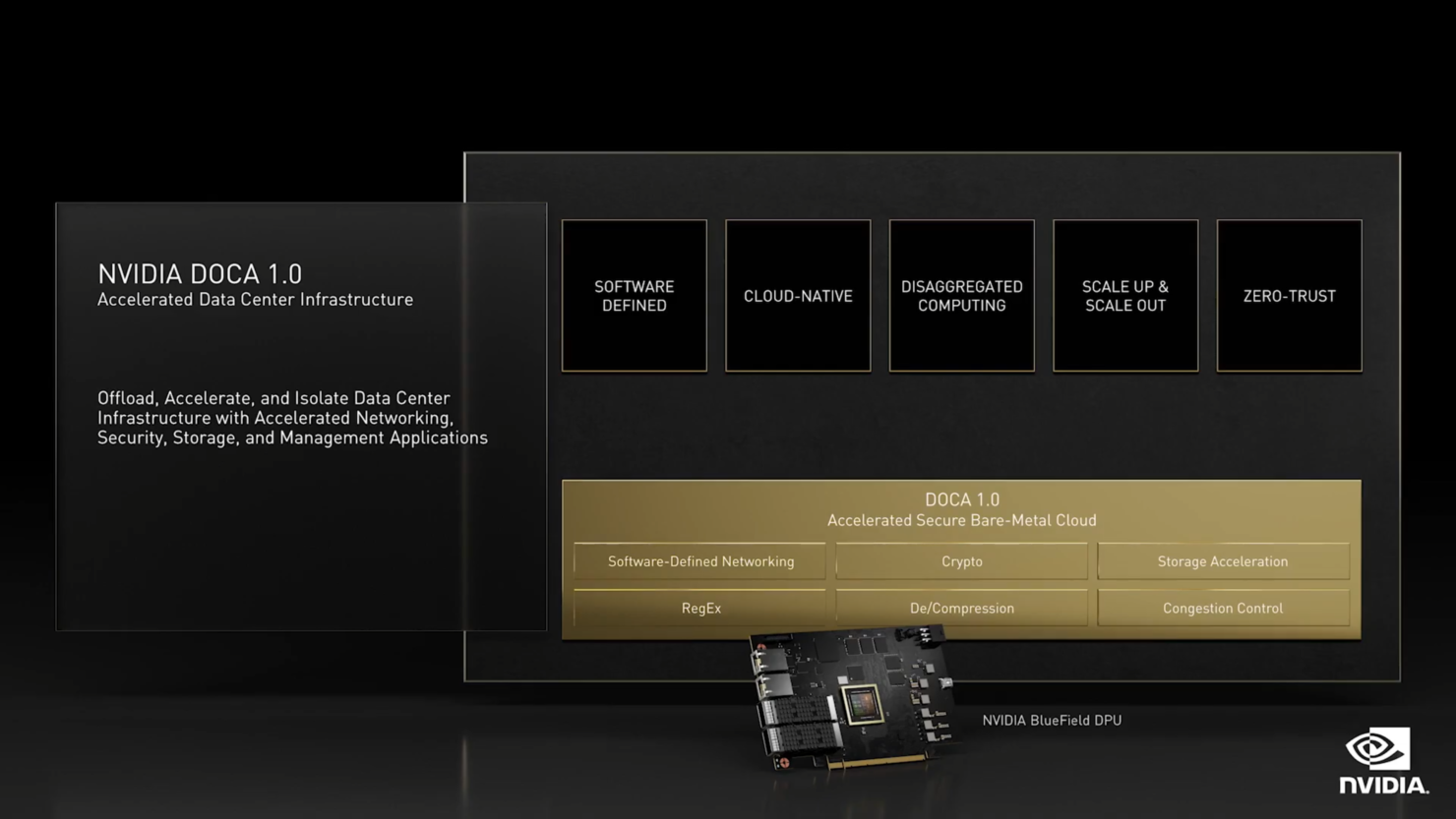

The NVIDIA Quantum-2 platform provides two network endpoint options: the NVIDIA ConnectX-7 NIC and the NVIDIA BlueField-3 InfiniBand DPU.

ConnectX-7, with 8 billion transistors in a 7-nanometer design, doubles the data rate of the currently leading HPC network chip, NVIDIA ConnectX-6. It also doubles the performance of RDMA, GPUDirect Storage, GPUDirect RDMA and In-Network Computing. ConnectX-7 will sample in January.

BlueField-3 InfiniBand, with 22 billion transistors in a 7-nanometer design, offers sixteen 64-bit Arm CPUs for offloading and isolating the data center’s infrastructure stack. BlueField-3 will sample in May.